Website analytics tools like Google Analytics, Microsoft Clarity, and Shopify’s built-in reports are incredibly useful for gaining insights into user behavior. They can show us traffic patterns, user demographics, and how visitors interact with our sites.

Unfortunately, these tools are far from perfect. They often miss data, can be thrown off by cookie settings and ad blockers, and sometimes misinterpret user actions. Analytics are beneficial, but they’re not always fully accurate, and treating them like a crystal ball can give you a distorted picture of how your website is performing.

Usually, when we peek at our analytics, we want to know if we’re doing better than we did last year, or last quarter, or last month.

Can that comparison give us a helpful snapshot of how we’re doing? Sure. But so many things change in 365 days: consumer behavior, your offer, your competitors’ offers, and more.

So if you want to know “Will this tweak on my website yield an uplift in conversions?”, comparing one time period with an earlier one will never be fully accurate, because you can’t tell how much of the difference came from your tweak and how much came from everything else that changed.

To get a much more accurate answer to the question “Will this change on my website lead to more conversions?”, you want to compare data collected over the same period: now against now.

Talking directly to customers and clients provides invaluable insights that numbers alone can’t offer. These conversations can reveal the why behind user behavior and highlight pain points that data might miss.

But qualitative research isn’t foolproof either. It can be biased based on who you talk to and how you interpret their feedback. Just like with analytics, it’s a piece of the puzzle, but not the whole picture.

Website experimentation, a core practice of conversion rate optimization (CRO), lets you test your ideas in real-world scenarios rather than making assumptions.

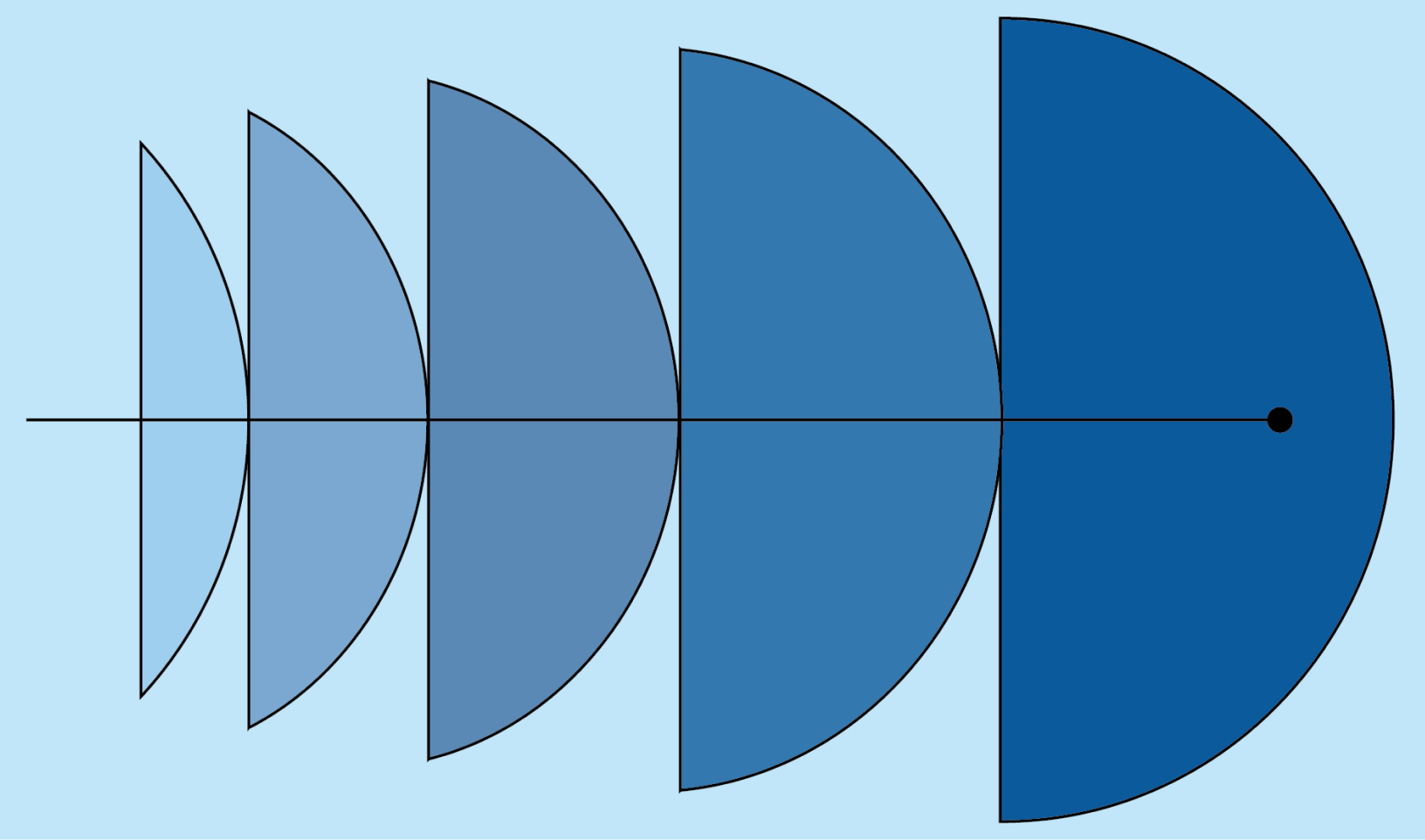

Instead of comparing now to last month or last year, a classic A/B test lets you compare now to now: two versions of a page run at the same time, and each visitor sees one of them.
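To make "now to now" concrete, here is a minimal sketch of how visitors might be split between two versions during the same period. It assumes you have some stable visitor ID (for example, from a first-party cookie). The function name `assign_variant` and the experiment name `homepage-headline` are made up for illustration. Real testing tools handle this step for you and may do it differently.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "homepage-headline") -> str:
    """Return "A" or "B" for a visitor, the same answer on every visit.

    Hypothetical helper: hashing the experiment name together with the
    visitor ID gives a stable, roughly 50/50 split without storing anything.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Even hash values see version A, odd values see version B.
    return "A" if int(digest, 16) % 2 == 0 else "B"


if __name__ == "__main__":
    for visitor in ["visitor-1", "visitor-2", "visitor-3"]:
        print(visitor, "sees version", assign_variant(visitor))
```

Because both groups visit during the same days, seasonality, ad campaigns, and competitor moves affect them equally, which is what makes the comparison fair.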

Here’s the basic A/B testing process:

Experimentation provides concrete evidence of what works and what doesn’t. Instead of assuming your reading of the data is correct, you’re testing it out and getting far more clarity on what actually converts.
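One common way to turn A/B results into evidence is a two-proportion z-test, which asks how likely a gap this large would be if the two versions actually converted at the same rate. The sketch below uses only Python’s standard library. The function name and the visitor and conversion counts are invented for illustration, and most testing tools run their own (sometimes different) statistics for you.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare conversion rates of versions A and B.

    Returns (z, p_value) for a two-sided test, using the pooled
    conversion rate to estimate the standard error.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("No variation in the data; cannot run the test.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up numbers: 10,000 visitors per version.
    z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=250, n_b=10_000)
    print(f"z = {z:.2f}, p-value = {p:.4f}")
```

A small p-value (0.05 is a common cutoff) suggests the difference between versions is unlikely to be pure chance. It does not tell you how large the true uplift is.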

To get statistically significant results from A/B testing, you need a decent amount of traffic and conversions. As a rule of thumb, you should have at least 1,000 conversions a month before relying on A/B tests. Without this volume, a difference between versions could easily be random noise, so your results might not be reliable.
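To see why volume matters, here is a rough sketch of the standard sample-size estimate for comparing two conversion rates. The 5% significance level and 80% power are common defaults rather than figures from this article, and the function name, baseline rate, and uplift are made-up examples. Treat the output as a ballpark, not a guarantee.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Estimate visitors needed in EACH version to detect a given uplift.

    baseline_rate: current conversion rate, e.g. 0.02 for 2%
    relative_lift: uplift you hope to detect, e.g. 0.10 for +10%
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    n = visitors_per_variant(baseline_rate=0.02, relative_lift=0.10)
    print(f"About {n:,} visitors per version")
```

With a 2% baseline and a hoped-for 10% relative uplift, this works out to roughly 80,000 visitors per version. The smaller the uplift you want to detect, the more traffic you need, which is why low-volume sites struggle to reach significance.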

If your website doesn’t have a lot of traffic, you can still experiment effectively.

A few ideas: